The Challenge of Data Integration

The Big Challenge of Data Integration

Drug discovery is based on analysis of complementary association between drugs and diseases existing in many data types. Integration of all data types in a single repository (data lake) is an important prerequisite for accuracy. The main challenges to integration, are the heterogeneity of a large number of data types and some data is stored in inaccessible silos. To address the problem of heterogeneity Iteru adopted an effective data extraction solution. It is based on using multiple text extraction methods depending on the type of data. It leverages technological solutions to discover and access data stored in different silos.

Iteru Data Sources

Iteru platform is based on accessing eight critical data types, six are: publications, FDA, lab, clinical trials, patient data and drug banks. Iteru will also include the results of analysis of the two multimodal data: genomics and digital pathology. In comparison, many competitors use a small portion of only 2 or 3 data types. Because of the time constraint and lack of resources, a proof of concept product, built by Iteru, includes samples of 4 data types: publications, FDA, lab and clinical trials. Other data types will be included after funding. Software for integrating all data types has already being built.

In subsequent years, in attempting to reach the target of 1 Petabyte of data, Iteru will seek collaboration with biomedical companies and publishers who may be willing to provide their biomedical data in exchange for Iteru services, for instance labeling their data.

Data and Cost Reduction Without Sacrificing Accuracy

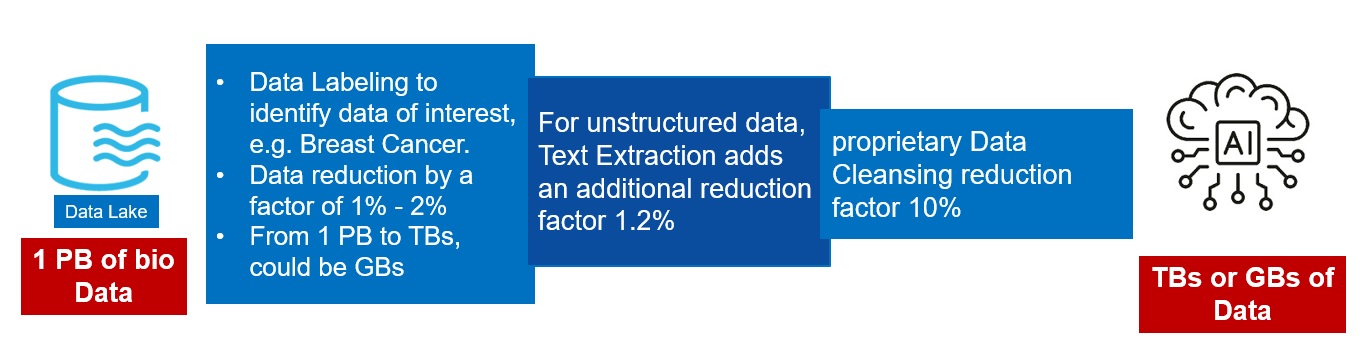

AI for one petabyte of data is challenging even for an expensive computer with a performance of 32 petaflops, for example Nvidia DGX H100 server. A high-end AI server costs between $200K -$300K. A company developing AI for petabyte of data needs multiple servers. In the cloud AI for 1 PB may cost up to $1.5M per year. To reduce computational cost, Iteru implemented a data reduction scheme without sacrificing accuracy.

Iteru's Data Reduction

Pronounced Reduction of computational cost

Out of 1 petabyte relevant data is extracted, causing data reduction by a factor of 1% – 2%, with an average of 1.5%. To clarify, no document covers all diseases. For instance, in PubMed out of its 37 million documents only 1.4% cover cancer (525,117). The reduction in storage, using the average of 1.5%, is from 1 petabyte to 15 terabytes. Data reduction to 15 terabytes greatly decreases the computational cost and increases accuracy as it removes statistical bias caused by irrelevant data. After computational reduction, there is no need for expensive servers. Servers priced at $20K – $40K satisfy our requirements.